Reviewers: COLOSSUS is up on NetGalley. Here’s the link to the page.

The Feb. 15 issue of Booklist will have a great review. Sneak peek: “Leslie’s latest (after The Between, 2021) is a spine-tingling sf novel certain to wow readers who want to explore sentient AI, parallel universes, paranoia, and sustaining human consciousness for generations to come.”

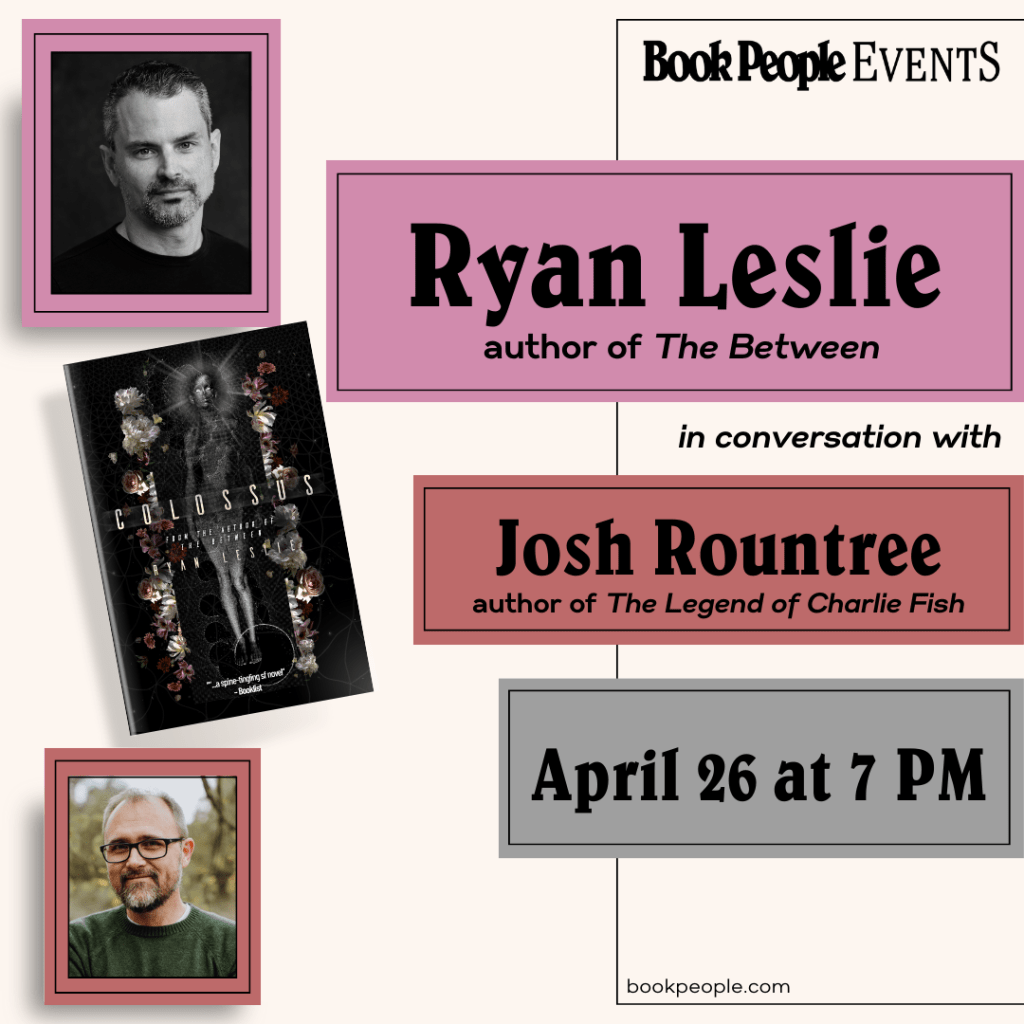

If you live in Austin and would like to come to the launch event in late March or early April, keep watching this space. Some details are already settled, and more will come very soon!

And for those waiting for the sequel to THE BETWEEN… I have news! I sent the manuscript to the editor this past weekend. So, it is written and on the path to being in the hands of readers. As the publishing timeline firms up, I’ll provide updates.